The 5 Best Models to Use with AI Agents in April 2026

A selection of five models worth trying with OpenClaw and the Hermes Agent.

Open-source AI agents like OpenClaw and Hermes Agent aren’t necessarily cheaper than Claude, Cursor, Perplexity Computer, or other enterprise-grade agents.

In fact, unless you’re planning to run local models, the costs can add up quickly.

For example, if you connect your agents to Claude Opus 4.6 or Gemini 3.1 Pro, you could easily end up spending $100 or more per day, depending on your use case and goals.

I’ve experienced this firsthand. I spent over $150 in credits building an AI-agent workflow for a newsletter.

I wrote this listicle to help you avoid spending as many credits as I did. There’s no need to overspend unless your goal requires extreme reasoning capabilities.

Now, let’s take a look at the top five Large Language Models (LLMs) for AI agents in April 2026.

1. MiniMax M2.7: The Self-Improving Agent

MiniMax M2.7 is probably the most fascinating model on this list because it can participate in its own improvement loop, a property MiniMax calls “self-evolution.”

During development, M2.7 executed over 100 autonomous optimization cycles on the OpenClaw framework, achieving a 30% performance gain without human intervention.

MiniMax reports 56.22% on SWE-Pro, 55.6% on VIBE-Pro, and 57.0% on Terminal Bench 2. Despite activating only 10 billion parameters (a fraction of its 230 billion total), M2.7 competes with much larger models.

This model is also fast and shines in end-to-end software engineering. It can take a project from concept to delivery, troubleshoot production issues, and collaborate across multi-agent setups.

Specifications:

Provider: MiniMax (Shanghai-based AI lab)

Release Date: March 18, 2026

Ideal use cases: Autonomous software engineering, multi-agent setups, and orchestration pipelines.

Limitations: Text-only model.

Architecture: 230B total parameters, 10B activated (Mixture of Experts)

Context Window: ~205,000 tokens

Pricing: $0.30/1M input tokens, $1.20/1M output tokens (~$0.06 blended with cache)

2. Qwen3.6-Plus: Alibaba’s Million-Token Agent

Alibaba released this model with a full 1 million token context window, meaning you can feed it entire codebases, thousands of pages of legal documents, or hours of meeting transcripts in a single prompt.

The architecture is a hybrid of advanced linear attention and sparse MoE routing. Some configurations have roughly 80B total parameters with just 3B active, while the full Plus variant has 480B total with 35B active.

Where Qwen3.6-Plus truly distinguishes itself is agentic coding. On SWE-Bench Verified, it scores 78.8%. It excels at repository-level engineering, meaning it can navigate entire codebases, resolve complex bugs, handle frontend development, and support terminal operations for full-stack workflows, which is pretty much what you need with OpenClaw and Hermes.

Beyond coding, it’s a strong general-purpose agent: long-horizon planning, tool-calling, STEM reasoning, and instruction following are all areas where it dominates according to Alibaba’s benchmarks. It supports multilingual adaptation across 100+ languages and is deeply integrated into Alibaba Cloud Model Studio and the Qwen App ecosystem.

Specifications:

Provider: Alibaba / Qwen (Hangzhou, China)

Release Date: April 2, 2026

Ideal use cases: Repository-scale code repair and generation, long-document analysis (legal, medical, financial), multilingual enterprise automation, agentic planning workflows with extensive tool use.

Limitations: No native audio processing.

Architecture: 480B total parameters, 35B active (8 of 160 MoE experts)

Context Window: 1,000,000 tokens

Pricing: Currently free in preview via OpenRouter
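Before you dump a whole repository into a 1,000,000-token window, it helps to check whether it actually fits. Here is a minimal sketch of that check. It assumes roughly 4 characters per token, which is a common rule of thumb for English text and not Qwen's actual tokenizer. The function names and the 50,000-token reserve for the model's reply are my own illustrative choices.

```python
def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Rough token estimate. chars_per_token is an assumed heuristic, not a real tokenizer."""
    return int(len(text) / chars_per_token)


def fits_in_context(texts, context_window=1_000_000, reserve_for_output=50_000):
    """Return (estimated_tokens, fits) for a list of strings.

    context_window defaults to the figure quoted for Qwen3.6-Plus above.
    reserve_for_output is a hypothetical buffer left for the model's reply.
    """
    total = sum(estimate_tokens(t) for t in texts)
    budget = context_window - reserve_for_output
    return total, total <= budget


# Example: two stand-in "files" of 400,000 characters each.
files = ["x" * 400_000, "y" * 400_000]
total_tokens, ok = fits_in_context(files)
print(f"~{total_tokens:,} tokens, fits: {ok}")
```

For a real project, you would read the file contents from disk and, ideally, swap the heuristic for the provider's tokenizer, since code and non-English text often tokenize less efficiently than plain English.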

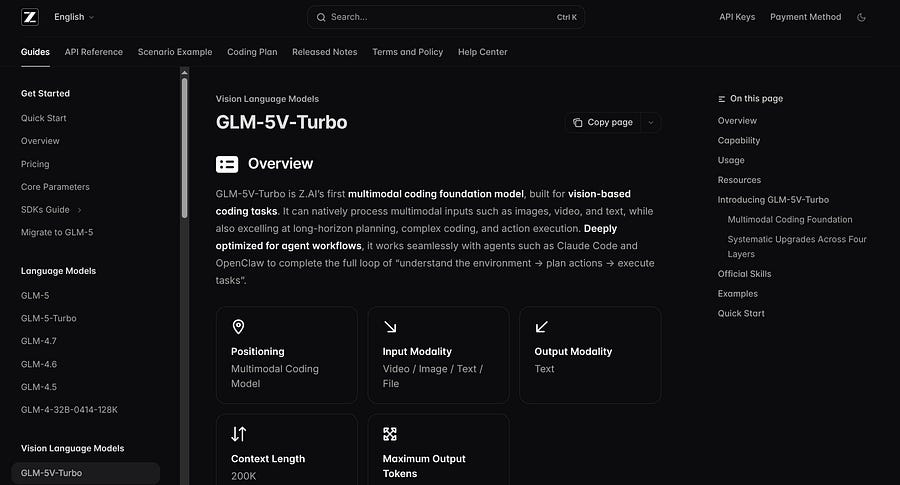

3. GLM-5V-Turbo: Zhipu AI’s Vision-to-Code Model

GLM-5V-Turbo is a multimodal coding foundation model designed for vision-based coding and agentic workflows. You can feed it a screenshot or a design mockup, and it will generate production-ready frontend code in return. Z.AI claims it is deeply optimized for tools such as Claude Code and OpenClaw.

On Design2Code, a benchmark for turning visual designs into functional code, GLM-5V-Turbo scores 94.8, while Claude Opus 4.6 scores 77.3. That is a large gap. Z.AI also reports leading or strong results on GUI navigation benchmarks like AndroidWorld and WebVoyager.

For pure text coding, GLM-5 remains highly competitive: it scores 77.8% on SWE-Bench Verified, close to Claude Opus 4.5’s 80.9% in the public comparison.

Specifications:

Provider: Zhipu AI (Z.ai) / Tsinghua University

Release Date: April 1, 2026

Ideal use cases: Design-to-code pipelines (Figma/screenshots to HTML/React), GUI automation and testing, multimodal document analysis, and agentic workflows requiring visual grounding.

Limitations: Multimodal focus means it may be over-engineered for text-only tasks.

Architecture: 744B total parameters, 40B active (MoE), CogViT vision encoder

Context Window: ~200,000–203,000 tokens

Pricing: $1.20/1M input tokens, $4.00/1M output tokens

4. Gemma 4: Google’s Best Local Model

Gemma 4 from Google DeepMind is here to democratize AI development for millions of users. Released under an Apache 2.0 license, the entire family can be downloaded, modified, and deployed commercially with zero API fees.

The range is remarkable: E2B (~2B parameters) runs on a Raspberry Pi or an NVIDIA Jetson Orin Nano. E4B fits comfortably on mid-range smartphones. The 26B MoE variant activates only ~4 billion parameters while maintaining the capacity of a much larger model.

The 31B model scores 80% on LiveCodeBench, 85.7% on GPQA Diamond, 88.4% on MMMLU (a multilingual variant of MMLU), and a knowledge category average of 61.3%. The 26B MoE model hits 77.1% on LiveCodeBench and 82.6% on MMLU-Pro. For context, these are scores that models with 10–20x the parameters were posting just a year ago.

The E2B and E4B models include native audio input, and all variants support built-in thinking mode (step-by-step reasoning), function calling for agent workflows, and multilingual support across 140+ languages.

Specifications:

Provider: Google DeepMind

Release Date: April 2, 2026

Architecture: Four variants. E2B (2B), E4B (4B), 26B MoE (~4B active), 31B Dense

Ideal use cases: Offline and edge AI deployments, privacy-sensitive applications, custom fine-tuning for specialized domains, mobile/IoT AI, developer tooling, and local code assistants.

Limitations: The smaller models (E2B/E4B) are fine for lightweight tasks but are not replacements for frontier models on complex reasoning. The 31B model, while impressive, still falls behind Gemini 3.1 Pro and Qwen3.6-Plus. The larger models (26B and 31B) have no native audio input.

Context Window: 128K (E2B/E4B), 256K (26B/31B)

License: Apache 2.0

Pricing: Free (open weights)
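If you are wondering which Gemma 4 variant fits on your hardware, a back-of-the-envelope estimate is parameter count times bits per weight, divided by 8. The sketch below uses the parameter counts from the list above. The function name and the choice of quantization levels are my assumptions, and the estimate covers weights only, so the KV cache, activations, and runtime overhead will push real usage higher.

```python
# Parameter counts in billions, as listed in the specifications above.
GEMMA_4_VARIANTS = {
    "E2B": 2,
    "E4B": 4,
    "26B MoE": 26,   # a MoE model typically keeps all experts in memory,
                     # even though only ~4B parameters are active per token
    "31B Dense": 31,
}


def weight_memory_gb(total_params_billion: float, bits_per_weight: int) -> float:
    """Approximate memory for the weights alone, in GB (1 GB = 10^9 bytes)."""
    total_bytes = total_params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


for name, params in GEMMA_4_VARIANTS.items():
    fp16 = weight_memory_gb(params, 16)  # unquantized half precision
    q4 = weight_memory_gb(params, 4)     # hypothetical 4-bit quantization
    print(f"{name}: ~{fp16:.1f} GB at 16-bit, ~{q4:.1f} GB at 4-bit")
```

By this estimate, the 31B Dense model needs about 15.5 GB for its weights at 4-bit, which is why it targets a workstation GPU while E2B (about 1 GB at 4-bit) can run on small edge devices.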

5. Claude Opus 4.6: The King of Reasoning

Claude Opus 4.6 is Anthropic’s flagship reasoning model, optimized for complex agentic workflows and planning. It powers advanced multi-agent systems and excels at maintaining coherence across extended interactions.

The architecture balances massive scale with practical deployment: a reported 1.4T-parameter MoE design that activates ~120B parameters per token.

Claude Opus 4.6 leads most agentic coding benchmarks with 82.3% on SWE-Bench Verified, 94.2% on TerminalBench, and 78.6% on SWE-Pro. It also scores 89.4% on GPQA Diamond and 87.2% on AIME 2026, positioning it among the top 3 overall models.

It includes constitutional AI safety guardrails, native multimodal reasoning (text+image), and a “thinking mode” for step-by-step chain-of-thought. The model shines in repository-scale codebases, production debugging, and enterprise automation requiring strict instruction adherence.

Specifications:

Provider: Anthropic

Release Date: March 12, 2026

Architecture: 1.4T MoE (120B active), hybrid dense+sparse attention

Ideal use cases: Enterprise agent orchestration, repository-scale engineering, compliance-heavy automation, multi-agent collaboration, and production system debugging.

Limitations: Higher latency than smaller specialist models. Very expensive.

Context Window: 1M tokens

Pricing: $5/1M input, $25/1M output (~$8 blended)
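To see how quickly these prices diverge, here is a minimal sketch that estimates a day's bill from the per-million-token list prices quoted in this article. The workload (10 million input tokens and 2 million output tokens per day) is a hypothetical figure I picked for illustration, and the calculation ignores cache discounts, free previews, and any provider-specific fees.

```python
# (input price, output price) in USD per 1M tokens, as quoted in this article.
PRICES_PER_MILLION = {
    "MiniMax M2.7": (0.30, 1.20),
    "GLM-5V-Turbo": (1.20, 4.00),
    "Claude Opus 4.6": (5.00, 25.00),
}


def daily_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Estimated cost in USD for one day's token usage, without caching."""
    input_price, output_price = PRICES_PER_MILLION[model]
    return input_tokens / 1e6 * input_price + output_tokens / 1e6 * output_price


# Hypothetical agent workload: 10M input tokens and 2M output tokens per day.
for model in PRICES_PER_MILLION:
    cost = daily_cost(model, 10_000_000, 2_000_000)
    print(f"{model}: ${cost:.2f} per day")
```

With that assumed workload, the same agent costs roughly $5.40 a day on MiniMax M2.7, $20 on GLM-5V-Turbo, and $100 on Claude Opus 4.6.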

Conclusion

The five LLMs presented in this piece are likely the best ones to power your AI agents at the time of writing.

In the end, it’s all about cost and capability. You could use Claude Opus 4.6 for all your tasks, and OpenClaw or Hermes Agent would run smoothly (even if it is not the fastest model). But you would be burning credits like a madman, even if you’re not doing much.

You don’t need to spend $100 a day or more. Here is a quick cheat sheet to help you choose:

MiniMax M2.7: Choose this if you want an autonomous, self-improving pipeline. It keeps your API bills incredibly low.

Qwen3.6-Plus: Choose this if you need to dump an entire codebase, enterprise repository, or a mountain of transcripts into your agent’s memory. It is also currently free in preview and tailored for AI agents.

GLM-5V-Turbo: The undisputed choice for vision-based tasks. If your workflow involves turning screenshots or Figma mockups into functional frontend code, or automating GUI navigation, look no further.

Gemma 4: Use it if privacy is a requirement or you want to run offline edge deployments with absolutely zero API fees.

Claude Opus 4.6: For tackling complex enterprise orchestration, strict compliance, or deep multi-step reasoning.
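If you want your agent setup to make this choice for you, the cheat sheet can be sketched as a small routing function. This is only an illustration: the `TaskNeeds` fields, the order in which the rules are checked, and the 200,000-token threshold (taken from the smaller context windows listed above) are my assumptions, not part of OpenClaw or Hermes Agent.

```python
from dataclasses import dataclass


@dataclass
class TaskNeeds:
    """Hypothetical description of what a task requires."""
    must_run_offline: bool = False
    needs_vision: bool = False
    needs_deep_reasoning: bool = False
    estimated_context_tokens: int = 0


def pick_model(needs: TaskNeeds) -> str:
    """Map task requirements to a model, following the cheat sheet above."""
    if needs.must_run_offline:
        return "Gemma 4"            # privacy and zero API fees
    if needs.needs_vision:
        return "GLM-5V-Turbo"       # screenshots, mockups, GUI navigation
    if needs.needs_deep_reasoning:
        return "Claude Opus 4.6"    # expensive, so only when required
    if needs.estimated_context_tokens > 200_000:
        return "Qwen3.6-Plus"       # 1M-token context window
    return "MiniMax M2.7"           # low-cost default


print(pick_model(TaskNeeds(estimated_context_tokens=600_000)))
print(pick_model(TaskNeeds(needs_vision=True)))
```

Putting the cheapest model last as the default means you only pay for a more expensive one when a task gives a concrete reason to.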

If you’re just starting, MiniMax M2.7 and Qwen3.6-Plus may be the best options. They’re cost-effective models, and unlike local models, they don’t require you to provide as much context upfront.

The more you understand the agent’s architecture, the less you need to worry about the model’s benchmarks, because you’ll be able to tweak many things before deciding what to ask it to do.
